When Heat Reveals What Eyes Cannot See

Building a CNN-XGBoost Hybrid Model for Breast Thermal Image Classification

In 2020, Indonesia reported 65,858 new breast cancer cases, accounting for 16.6% of all new cancer diagnoses in the country. Behind each statistic is a story of a mother, daughter, sister, or friend. Early detection can mean the difference between life and death, yet effective screening programs remain out of reach for many.

This reality drove me to explore an unconventional approach: what if we could detect early signs of breast cancer by simply reading heat patterns?

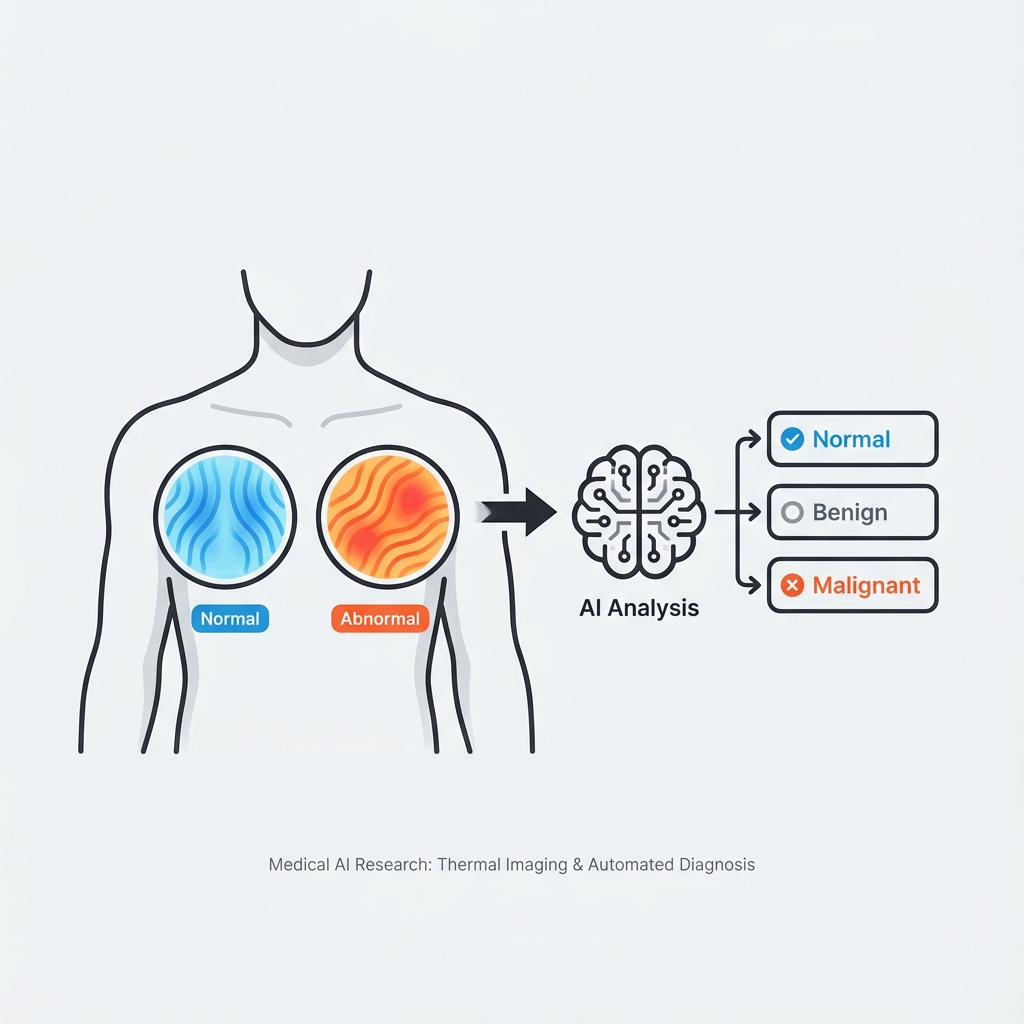

The Silent Language of Heat

Every cell in our body radiates heat. Cancerous cells, with their accelerated metabolism and increased blood flow, radiate slightly more. This temperature differential—invisible to the naked eye—creates a thermal signature that can be captured by infrared cameras.

Traditional detection methods like mammography and biopsy, while accurate, involve physical contact, radiation exposure, or invasive procedures. Thermography offers something different: a completely non-invasive, radiation-free way to detect thermal anomalies that might indicate underlying issues.

The Challenge: Beyond Binary

Most existing research attempted to classify thermal images into just two categories: normal or abnormal. But clinical reality is more nuanced. A patient with a benign condition requires different care than one with malignancy. I wanted to push the boundaries.

Research Objective

Develop a deep learning model capable of classifying breast thermal images into three distinct categories:

- Normal: Healthy breast tissue with symmetrical thermal patterns

- Benign: Abnormal but non-cancerous conditions

- Malignant: Cancerous tissue requiring immediate attention

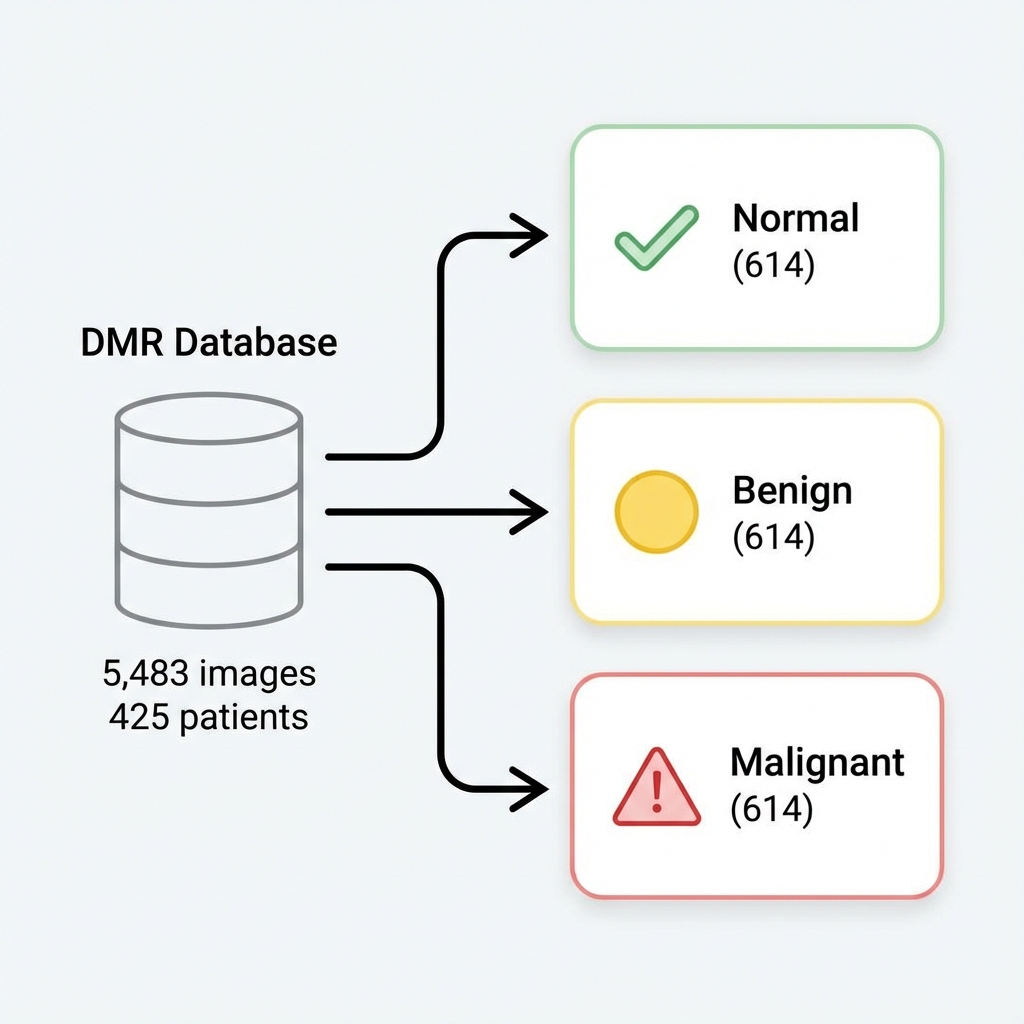

Curating the Dataset

Quality data is the foundation of any machine learning project. I worked with the Database for Mastology Research (DMR) from Visual Lab—a publicly available dataset containing thermal images from real patients.

The original dataset categorized images as simply "Normal" or "Abnormal." To achieve three-class classification, I developed a statistical reclassification approach:

- Analyzed temperature distribution histograms using Python

- Calculated mean temperature deviation between left and right breasts

- Cross-referenced with medical records including mammography results and biopsy outcomes
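The first two steps can be sketched in a few lines of Python. This is a minimal illustration, not the actual reclassification code: it assumes each breast region has already been segmented into a 2-D grid of temperatures in degrees Celsius, and the function names, the 0.5-degree bin width, and the toy values are all hypothetical.

```python
from statistics import mean


def mean_asymmetry(left, right):
    """Absolute difference between the mean temperatures of two regions.

    `left` and `right` are 2-D lists of temperatures (assumed Celsius),
    one per breast region. Segmentation is assumed to be done already.
    """
    left_mean = mean(t for row in left for t in row)
    right_mean = mean(t for row in right for t in row)
    return abs(left_mean - right_mean)


def temperature_histogram(region, bin_width=0.5):
    """Count pixels per temperature bin, keyed by each bin's lower edge."""
    counts = {}
    for row in region:
        for t in row:
            edge = (t // bin_width) * bin_width
            counts[edge] = counts.get(edge, 0) + 1
    return dict(sorted(counts.items()))


# Toy 2x2 regions, invented purely for illustration.
left = [[33.0, 33.5], [34.0, 33.5]]
right = [[34.0, 34.5], [35.0, 34.5]]

asymmetry = mean_asymmetry(left, right)
hist = temperature_histogram(left)
print(asymmetry, hist)
```

A larger asymmetry value would flag an image for closer review against the medical records; any threshold for that decision would have to come from the data and is not assumed here.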

Final Dataset Distribution

| Split | Normal | Benign | Malignant | Total |
|---|---|---|---|---|
| Training | 430 | 430 | 430 | 1,290 |
| Validation | 123 | 123 | 123 | 369 |
| Testing | 61 | 61 | 61 | 183 |
| Total | 614 | 614 | 614 | 1,842 |

With 614 images in every class, the dataset is perfectly balanced, which helps keep the model from developing a bias toward any particular class.
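The per-class counts in the table are consistent with a 70/20/10 split of 614 images per class. That ratio is an inference from the numbers, not something stated in the original dataset notes, so treat the sketch below as one plausible way to reproduce the counts. The helper names and the fixed seed are hypothetical.

```python
import random


def split_counts(per_class, fractions=(0.7, 0.2, 0.1)):
    """Return (train, val, test) sizes; the test split takes the remainder."""
    train = round(per_class * fractions[0])
    val = round(per_class * fractions[1])
    test = per_class - train - val
    return train, val, test


def stratified_split(items_by_class, fractions=(0.7, 0.2, 0.1), seed=0):
    """Shuffle each class separately so every split stays balanced."""
    rng = random.Random(seed)
    splits = {"train": [], "val": [], "test": []}
    for label, items in items_by_class.items():
        shuffled = list(items)
        rng.shuffle(shuffled)
        n_train, n_val, _ = split_counts(len(shuffled), fractions)
        splits["train"] += [(x, label) for x in shuffled[:n_train]]
        splits["val"] += [(x, label) for x in shuffled[n_train:n_train + n_val]]
        splits["test"] += [(x, label) for x in shuffled[n_train + n_val:]]
    return splits


counts = split_counts(614)

# Placeholder image ids stand in for real file names.
items_by_class = {c: list(range(614)) for c in ("Normal", "Benign", "Malignant")}
splits = stratified_split(items_by_class)
print(counts, {name: len(rows) for name, rows in splits.items()})
```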

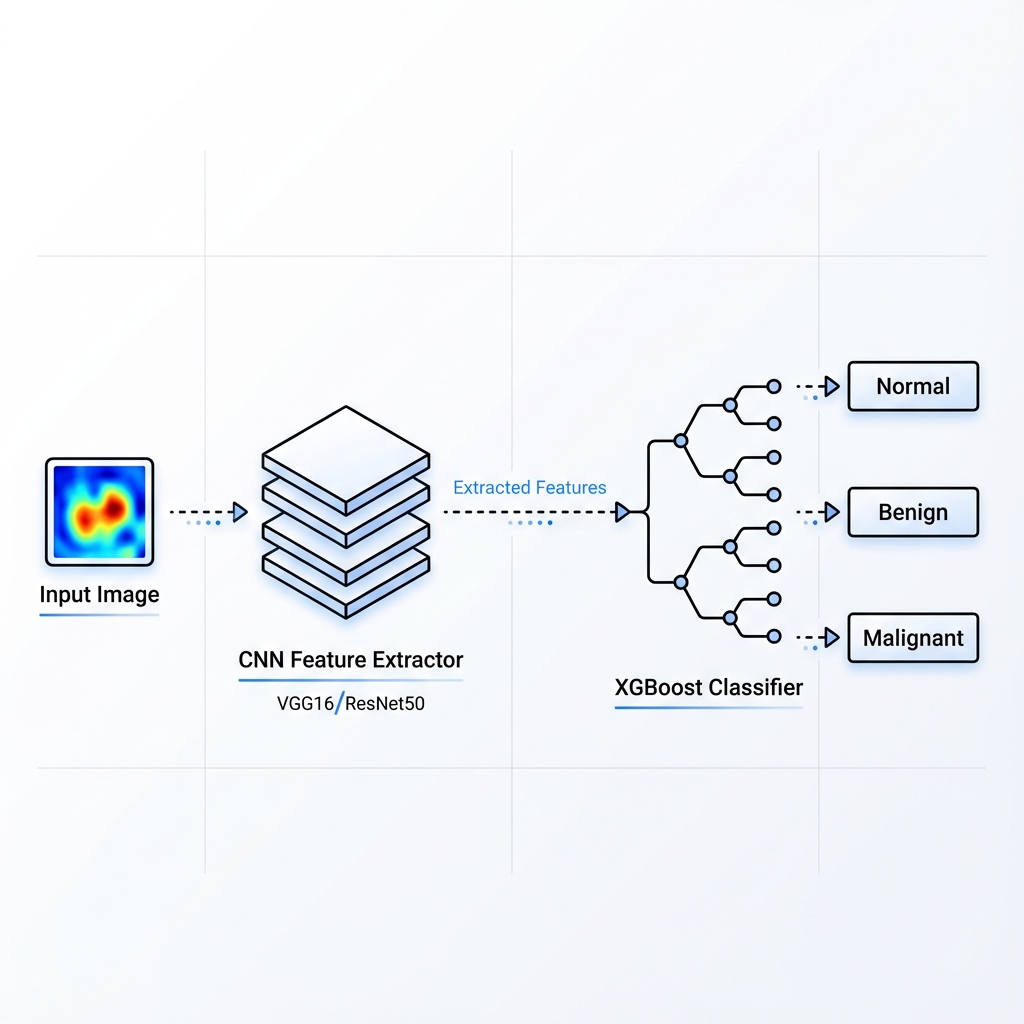

The Hybrid Architecture

The core innovation lies in combining two powerful paradigms: the feature extraction prowess of Convolutional Neural Networks with the classification strength of XGBoost ensemble learning.

CNN Feature Extractor

I experimented with two pre-trained architectures:

- VGG-16: A classic architecture with 16 layers, known for its simplicity and effectiveness

- ResNet-50: Features residual blocks with skip connections that help prevent the vanishing gradient problem

The CNN component can be summarized in five stages: Input → Convolution → Pooling → Reshape → Fully-connected features. The fully-connected output is the feature vector handed to the classifier.
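To make those stages concrete, here is a toy, pure-Python walk through the same pipeline on a 5x5 "thermal" patch. It is only a sketch of the mechanics: the real extractors are pre-trained VGG-16 and ResNet-50 with learned filters, whereas the kernel, the dense weights, and the patch values below are hand-picked for illustration.

```python
def conv2d_valid(image, kernel):
    """Valid 2-D cross-correlation (what CNN conv layers compute) plus ReLU."""
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for i in range(len(image) - kh + 1):
        row = []
        for j in range(len(image[0]) - kw + 1):
            s = sum(image[i + a][j + b] * kernel[a][b]
                    for a in range(kh) for b in range(kw))
            row.append(max(0.0, s))  # ReLU keeps only positive responses
        out.append(row)
    return out


def max_pool2x2(fmap):
    """Non-overlapping 2x2 max pooling."""
    return [[max(fmap[i][j], fmap[i][j + 1], fmap[i + 1][j], fmap[i + 1][j + 1])
             for j in range(0, len(fmap[0]) - 1, 2)]
            for i in range(0, len(fmap) - 1, 2)]


def flatten(fmap):
    """Reshape a 2-D feature map into a 1-D vector."""
    return [v for row in fmap for v in row]


def dense(vector, weights):
    """Fully-connected layer without bias: one output per weight row."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]


# Input: toy temperatures with a warm spot in the centre (invented values).
image = [
    [30, 30, 30, 30, 30],
    [30, 31, 32, 31, 30],
    [30, 32, 35, 32, 30],
    [30, 31, 32, 31, 30],
    [30, 30, 30, 30, 30],
]
kernel = [[-1, 0], [0, 1]]                  # hand-picked diagonal-gradient filter
weights = [[0.5, 0.5, 0, 0], [0, 0, 1, 1]]  # hand-picked dense weights

pooled = max_pool2x2(conv2d_valid(image, kernel))
flat = flatten(pooled)
features = dense(flat, weights)
print(flat, features)
```

In the hybrid setup, a vector like `features` (much longer in practice) is what gets passed on instead of going through a softmax layer.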

XGBoost Classifier

Instead of using traditional softmax classification, I replaced the final layers with an XGBoost ensemble:

- Additive tree-boosting method

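- Fast to train: a 10x speedup over Random Forest is a commonly cited figure, though the actual gain depends on the data and settings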

- Handles sparse data efficiently

- Provides feature importance scores for explainability
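"Additive tree boosting" means each new tree is fitted to the errors left by the trees before it, and the final prediction is the sum of all of them. The sketch below shows that idea with depth-1 trees (stumps) and squared error on a single feature. It is plain gradient boosting written from scratch, not XGBoost itself, which adds second-order gradients, regularization, and a multi-class objective. All names and data here are hypothetical.

```python
def fit_stump(xs, residuals):
    """Find the single threshold split that best fits the residuals."""
    best = None
    for threshold in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        lv, rv = sum(left) / len(left), sum(right) / len(right)
        err = sum((r - lv) ** 2 for r in left) + sum((r - rv) ** 2 for r in right)
        if best is None or err < best[0]:
            best = (err, threshold, lv, rv)
    return best[1:]  # (threshold, left_value, right_value)


def predict_stump(stump, x):
    threshold, lv, rv = stump
    return lv if x <= threshold else rv


def boost(xs, ys, rounds=20, learning_rate=0.5):
    """Fit stumps one after another, each on the current residuals."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps = []
    for _ in range(rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * predict_stump(stump, x)
                 for p, x in zip(preds, xs)]
    return {"base": base, "stumps": stumps, "learning_rate": learning_rate}


def predict(model, x):
    """Additive prediction: base value plus the scaled sum of all stumps."""
    total = sum(predict_stump(s, x) for s in model["stumps"])
    return model["base"] + model["learning_rate"] * total


# Toy data: one feature, target 0 or 1 (invented for illustration).
xs = [1, 2, 3, 4, 5, 6]
ys = [0, 0, 0, 1, 1, 1]
model = boost(xs, ys)
mse = sum((predict(model, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)
print(mse)
```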

Results: The Numbers Tell a Story

After extensive training and hyperparameter tuning, both model variants showed promising performance:

VGG16-XGBoost

Stable: Consistent performance with minimal overfitting. The model correctly classified most Normal cases but showed some confusion between Benign and Malignant classes.

ResNet50-XGBoost

Best: Higher overall accuracy, though the gap between training and validation indicates some overfitting. The deeper architecture captured more nuanced features.

Understanding the Confusion Matrix

The confusion matrices revealed interesting patterns. Both models excelled at identifying Normal cases, with 61 correct predictions among the 61 Normal test images. The challenge lay in distinguishing Benign from Malignant, a clinically significant distinction that even human experts sometimes struggle with.
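For readers less familiar with confusion matrices, this short sketch shows how one is built for three classes and how per-class recall and precision fall out of it. The labels below are invented toy values, not the study's predictions, and the helper names are hypothetical.

```python
CLASSES = ["Normal", "Benign", "Malignant"]


def confusion_matrix(y_true, y_pred, classes=CLASSES):
    """Rows are true classes, columns are predicted classes."""
    index = {c: i for i, c in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


def per_class_recall(matrix):
    """Correct predictions divided by the number of true cases, per class."""
    return [row[i] / sum(row) if sum(row) else 0.0
            for i, row in enumerate(matrix)]


def per_class_precision(matrix):
    """Correct predictions divided by the number of predicted cases, per class."""
    columns = list(zip(*matrix))
    return [col[i] / sum(col) if sum(col) else 0.0
            for i, col in enumerate(columns)]


# Toy labels, invented for illustration only.
y_true = ["Normal", "Normal", "Benign", "Benign", "Malignant", "Malignant"]
y_pred = ["Normal", "Normal", "Benign", "Malignant", "Malignant", "Benign"]

matrix = confusion_matrix(y_true, y_pred)
recall = per_class_recall(matrix)
precision = per_class_precision(matrix)
print(matrix, recall, precision)
```

In a screening context, recall on the Malignant row is the number to watch, since a missed malignancy is the costliest error.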

Key Findings

- The ResNet50 variant's skip connections helped preserve gradient information, leading to better feature extraction

- Temperature asymmetry proved to be a reliable biomarker for initial classification

- XGBoost's ensemble approach provided more robust classification than traditional softmax layers

What This Research Taught Me

Hybrid Models Have Merit

Combining CNN feature extraction with ensemble classifiers can outperform end-to-end approaches for certain medical imaging tasks.

Transfer Learning Works

Pre-trained ImageNet weights transfer surprisingly well to thermal imagery—despite the dramatic domain difference.

Data Quality Matters Most

The careful statistical reclassification of the dataset was crucial. Garbage in, garbage out applies doubly in medical AI.

Explainability is Essential

XGBoost's feature importance scores provide transparency that's critical for clinical adoption.

Looking Forward

This research opens several exciting avenues for future exploration:

- Attention Mechanisms: Implementing Grad-CAM or attention layers to visualize which regions the model focuses on

- Multi-modal Fusion: Combining thermal data with clinical features for improved accuracy

- Larger Datasets: Collaborating with hospitals to gather more diverse thermal imaging data

- Clinical Validation: Working with medical experts to validate the approach in real screening scenarios

Acknowledgments

This research was conducted at Universitas Syiah Kuala under the supervision of Prof. Dr. Ir. Roslidar, S.T., M.Sc., IPM., ASEAN Eng. Special thanks to my co-authors Khairun Saddami and Essy Harnelly for their invaluable contributions.

The work was supported by the Institute for Research and Community Services (LPPM) Universitas Syiah Kuala under grant number 251/UN11.2.1/PG.01.03/SPK/PTNBH/2024.

If you're working on medical AI or thermal imaging research, I'd love to connect. Let's push the boundaries of what's possible in healthcare AI together.